Whose Line Is It Anyway? Human Decentered Design in ChatGPT

What ChatGPT's product design can tell us about its creators, their ideas, and ourselves

I’m AIpilled. I’m anxious, amazed, obsessed. I can’t shake the feeling that we don’t live here anymore — that we’re in a different world now, the contours of which are still unfurling out beyond the horizon. What’s worse is that we are not alone.

To be human is to embody main character syndrome; we’ve been Carrie Bradshaw since we slithered out of that primordial stew. I have a nagging feeling that with AI we’ve inadvertently de-centered ourselves. Maybe we’re approaching a world where AI is the main character — and we merely intern for Carrie Bradshaw. Maybe the existential risk we’re so fired up about — Skynet, P(doom|AGI), etc. — actually starts with the very quiet rupture of our I into a We.

As I’ve spent more time using ChatGPT, I’ve thought a lot about our subjectivity and intersubjectivity — our shaky I. ChatGPT feels like the prime example of what I’ll call “human de-centered design” — a product designed human-second (and a play on IDEO’s “human centered design” framework). In ChatGPT, the tech is made flesh — anthropomorphized — and made equal to, or more important than, the end user.

Why again is ChatGPT so important? Well, ChatGPT is the fastest growing consumer product ever, reaching 100M users in two months. This week, OpenAI finally released its iOS app — which is incredibly fast, easy, and reliable — and which I think will prove to be a watershed moment in the mass adoption of AI. ChatGPT is now in your pocket, on your person, and it just works.

What can we learn from ChatGPT’s design?

We can't ignore how a product’s design choices — intentional or not — influence how we interact with that product. ChatGPT is no different.

ChatGPT’s design is strikingly minimal, even by today’s standards, employing just a handful of typefaces, colors, and UI elements. People would think the form rudimentary if the content weren’t so powerful. But never forget that product design is intentional. It takes a lot of time and money to take an idea from a sketch to a global product able to serve millions.

ChatGPT’s minimal layout is in service of its strategy: OpenAI wants you to feel ChatGPT is a technology apart, without need for frills or distraction. The clean lines and white space underscore the technology’s power. And the core feature, of course, revolves around a chat interface. A chat interface is common enough, but that doesn’t make it benign. A chat UI implies a conversation, in this case between two entities: you, the asker, and ChatGPT, the answerer. The lack of instructions or disclaimers imbues the product with a sense of infallibility; it doesn’t need to be explained or caveated. Indeed, ChatGPT is mostly right except when it’s completely wrong.

The chatting construct belies the fact that ChatGPT is various. ChatGPT is not objective, but probabilistic. ChatGPT is not a singular source of knowledge, but a subset of human knowledge that’s been uploaded to the internet (and processed in one specific statistical way). ChatGPT is not pure computer processing, but the product of outsourced labor. ChatGPT isn’t just providing data, but hoovering your data. And ChatGPT is not just magic, but a business, one that’s run by ~10 white dudes, built by ~1000 engineers, and funded by the same VCs that brought us FTX and FB and the like. Ultimately, ChatGPT doesn’t provide answers, but speaks answer-shaped air. The chat UI is at once clever design, deceptive marketing, and wishful thinking.

Chat has an inherent anthropomorphic quality. But OpenAI takes this a step further with the product’s interactions. ChatGPT replies to you the way a human would. It looks like it’s actually typing — the cursor moves in fits and starts and takes time to finish its answer.

It can take quite a while for ChatGPT to return a response, so much so that users can tell it to “stop generating” if the answer is moving in the wrong direction. To make the product look like it’s typing is actually absurd; these services operate in fractions of a second. But the effect, again, heightens the product strategy. ChatGPT’s answers feel like they’re considered. The answers feel certain — and not like the inherently uncertain calculations that they are. As you use the product more, these subtle cues make you believe the service is more trustworthy than it is.

As someone who builds apps for a living, I would guess this typing design choice actually evolved from necessity. Generative AI applications require expensive processing (“compute”) that is slower than many other consumer products. You’ll notice that image generators like Midjourney are especially slow. (In this way, it feels like we’re living in the dial-up phase of the AI revolution.) But even so, ChatGPT could have obscured the processing time with a spinning wheel or “loading…” text or anything else. The anthropomorphized typing is a choice. (It’s also kind of goofy because while chat apps might show you someone is typing, they won’t render the text in real-time like that.)

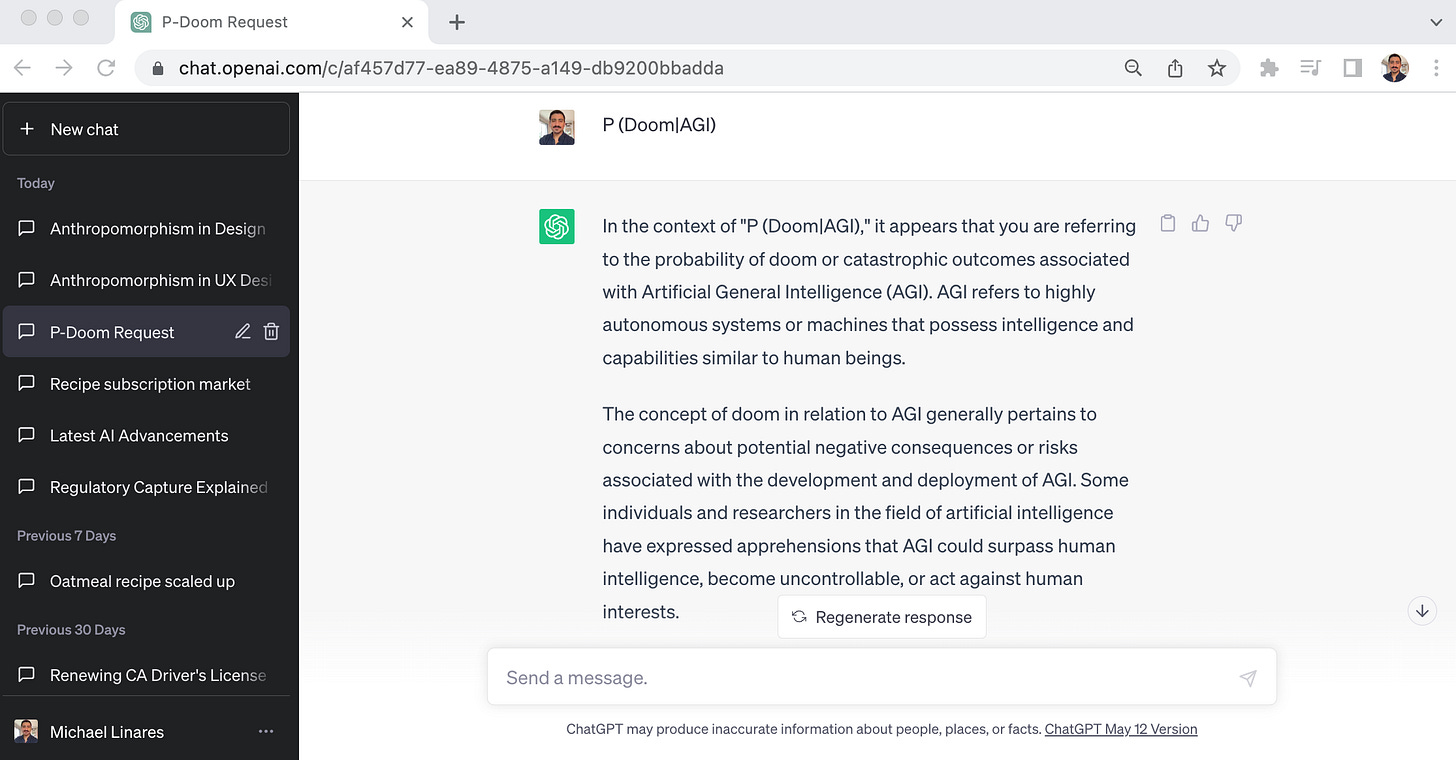

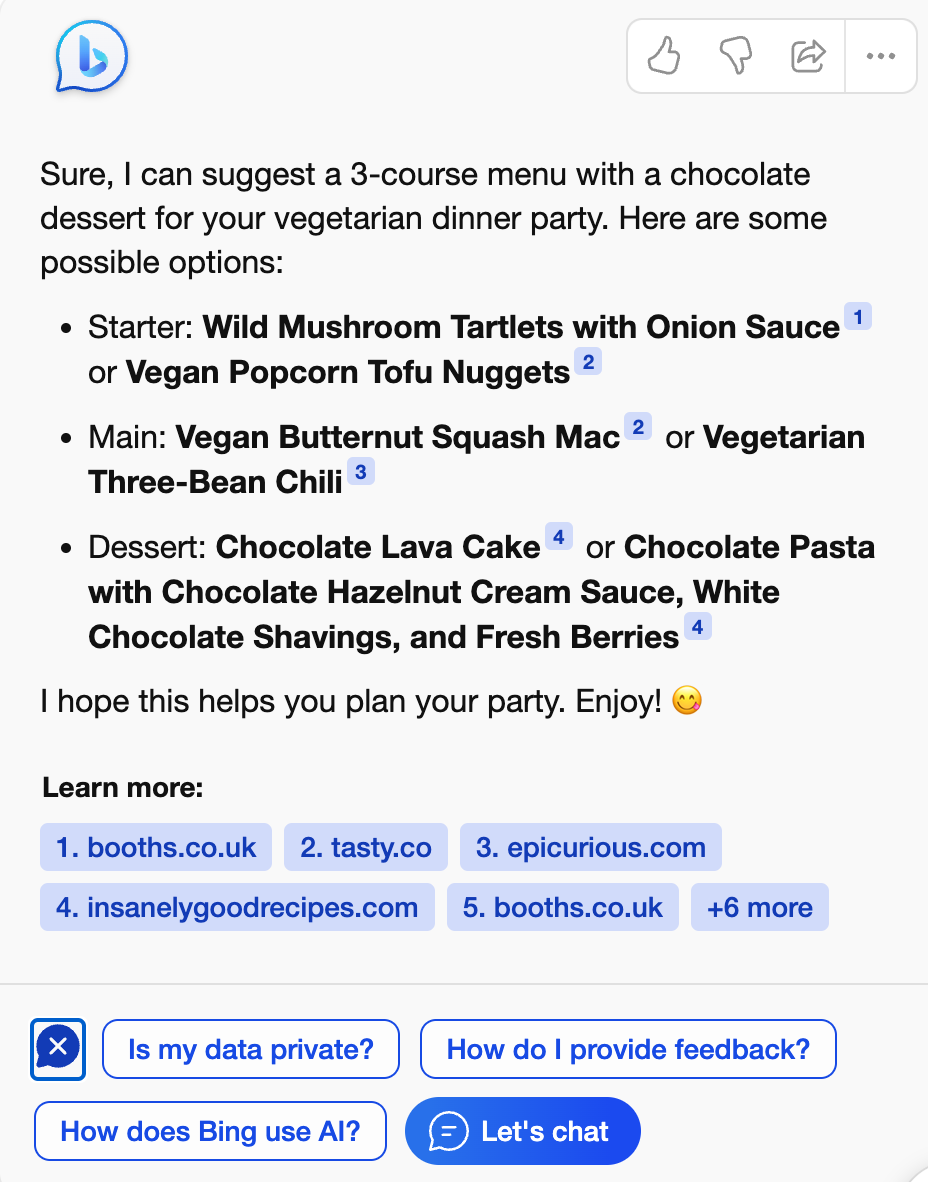

Beyond the anthropomorphic touches, the product does little to flag risks or otherwise highlight its own fallibility. There is a CYA legal disclaimer — in their design system’s smallest typeface — on desktop: “ChatGPT may produce inaccurate information about people, places, or facts.” The disclaimer doesn’t say more or link off to additional information. You might run into warnings if you ask for dangerous information (e.g., how to build a bomb) or for timely content (“ChatGPT is only trained through September 2021”). But all that feels tacked on. If the company were really invested in minimizing harms, it would have UI that foregrounds warnings. It could explain how its models produce misinformation. It could identify the parts of answers that are most disputed. Take a look at Bing:

Notice how Bing cites sources. The citations underscore that the data is crowdsourced. Users can look at sources and judge for themselves how much credibility to assign them. Bing also provides answers to common questions (“is my data private”) right there in the interface. Yes, it’s clunky, but it’s honest.

ChatGPT’s devil-may-care design reflects the ideology advanced by Sam Altman and OpenAI. Altman often speaks about his anxieties around AI and AGI. He publicly frets about the nonzero chance his technology will destroy humanity… yet he continues to develop newer and more powerful forms of his AI engine. He goes before Congress asking to be regulated… without considering self-regulation. The product flags that some of the information may be wrong… but it’s still open for business, still taking all your questions.

By the way, On the Media did a great episode this weekend on the AI regulatory hearings.

ChatGPT’s mobile app is more insidious

ChatGPT’s iOS app is more of the same but so much more dangerous because it works beautifully and will soon be ubiquitous.

The app defaults you into one conversation, and tries to keep you there (rather than asking you to start new chats, as on web). The legal disclaimer is totally gone, and there are no other disclaimers in its stead. The two most notable features further the app’s anthropomorphism.

First, OpenAI added a haptic effect so that you can literally feel ChatGPT typing its responses. Unfortunately, it’s pretty pleasant. It takes the dopamine hit of receiving a text message or notification and doubles it. Is ChatGPT in the room with you right now? Yes, it really is. I’ll freely admit that the haptic feedback is as genius as it is sinister. It somehow makes the experience more satisfying and more human-like without tumbling into the Uncanny Valley.

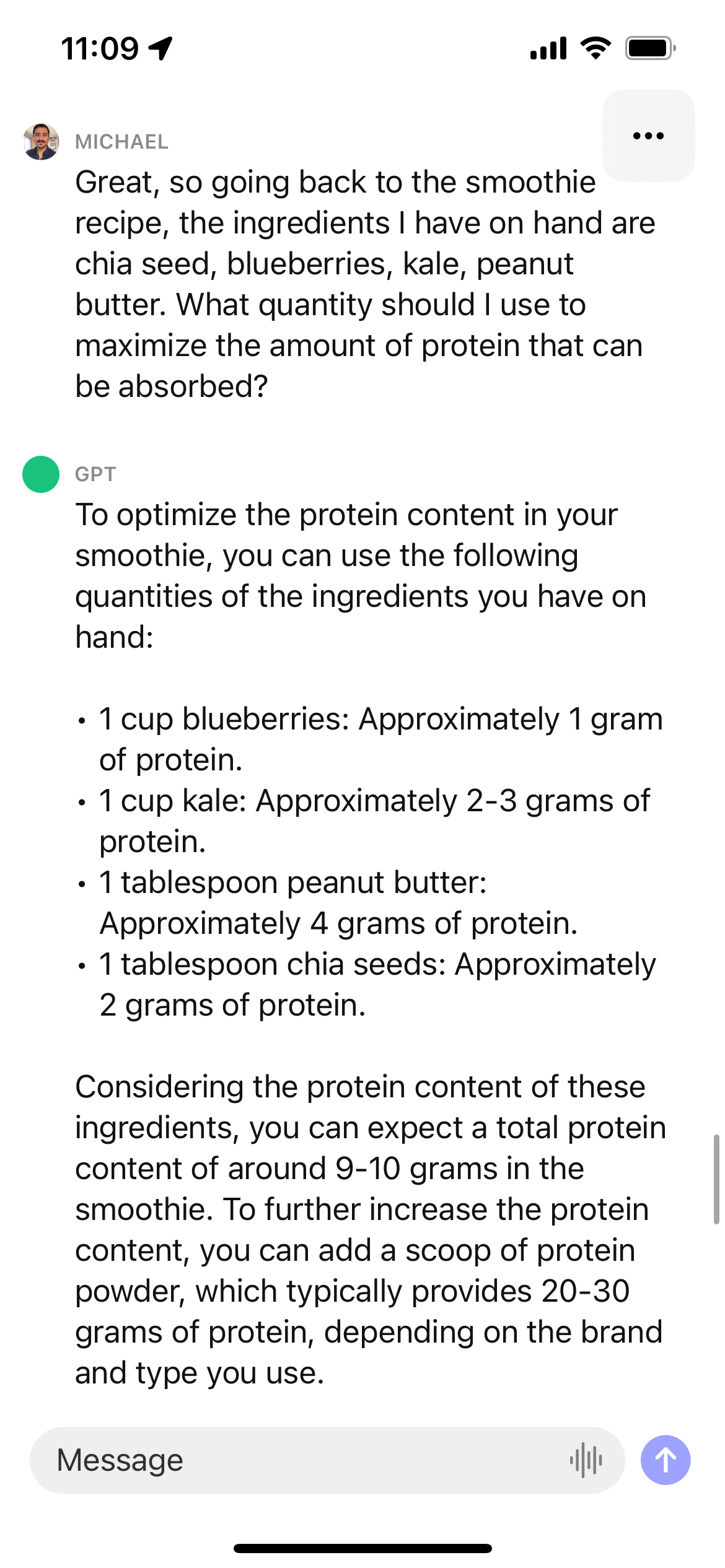

The second addition is voice. The only other new button in the interface is a microphone for voice input. I used it to make a smoothie this morning, and it worked like a charm. You tap the button and speak into your phone. ChatGPT quickly transcribes what you said, and gives you the option to edit it, though you really don’t need to (it’s gotten good). After two or three responses, I found myself settling into an actual “conversation.” I went on a long digression about protein absorption (how much? how often?). ChatGPT indulged me and reassured me that I should aim for 30 grams before suggesting a recipe tailored to the ingredients I had on hand.

I was surprised how easily I adapted to voice input. The difference between ChatGPT and the voice technologies of yore is that it actually works. ChatGPT can hear you and return a helpful, multifaceted answer; essentially, ChatGPT finally delivers on the promise of voice interfaces. (This comes too late for the 10k employees laid off from Amazon’s Alexa division this year.)

I’d bet that these voice capabilities will accelerate users’ adoption of ChatGPT and make them more comfortable creating complex queries. Consumers will unlearn the staccato language of Google search and instead talk to ChatGPT as they would a friend. Again, is ChatGPT in the room with you right now? Regrettably, yes.

Taken together, these two features push ChatGPT forward — and push humans to the side. If ChatGPT’s design is successful, it will continue to feel more human, and we will continue to use it, training its algorithms and volunteering our own data in the process.

What’s the problem with human-like AI?

Now I’m not saying that ChatGPT isn’t an incredible technology. It is. And as much as I’m a skeptic, I’m an optimist, too. I believe this technology will augment our abilities and help humanity advance. Unless, of course, AI decides it’s easier to thrive without us.

It’s also worth interrogating anthropomorphism at scale, which is a powerful shift we don’t yet understand. We know that humans easily form relationships with each other, with pets, and with tech. We also know that the history of technology is riddled with unintended consequences. The Washington Post reported a few months ago that users of Replika AI formed romantic relationships with its chatbot (“ladies and gentlemen… HER”). The company decided to block the product from getting intimate, which dismayed users. Similarly, we know from our recent political history that people will willingly believe what they read online if it confirms their worldviews. This dynamic gets dangerous when you put people in contact with people-like products. Users start to trust something that feels human, but isn’t. That product might provide bad advice or false comfort or misinformation. That product is also incentivized to keep the user doing things that benefit it — like uploading personal data. Keep in mind that these products are run by businesses with profit motives. More often than not, these businesses aren’t incentivized or resourced to mitigate harms at scale. Facebook, with all its billions of dollars, never managed to “solve” content moderation — or to prioritize it. It’s all starting to give HAL-9000.