Oops! ... I did it with GAN: Living in Generation AI

Unpacking the magic and pitfalls of generative AI, with a case study on Midjourney

Every time I sit down to write about AI, I feel I’m already behind. So much is changing and so quickly. And yet it’s clearly here to stay. We’re living in Generation AI for better or worse. What we’re seeing this year—this month, this week—is the fast mass consumerization of AI. In this post I want to take a look at “generative AI,” the term for this category of tools that use machine learning and large language models to produce images, videos, text, code, and audio. The highest profile ones turn text into images (DALL-E, Midjourney, Stable Diffusion), text into more text (GPT-3), and text into code (GitHub Copilot). This week I’ll walk you through two popular tools.

I’m particularly interested in generative tech as a category because these tools are bringing high-powered algo-driven processing power to the masses — consequences be damned. Just last month someone created an app, Draw Things, that runs Stable Diffusion locally, on your phone (a wild technical feat). This gives me pause because we still haven’t learned our lessons with the current crop of algorithms that have so thoroughly dominated our lives — what Cathy O’Neil calls “weapons of math destruction.” TikTok and other B2C products use algorithmic content to keep users agitated and engaged. Banks and police departments use B2B products to determine who they can trust (hint: they’re usually white). In most cases we don’t understand how these algorithms work or what the long-term consequences will be (as with political polarization). We know by now that these algorithms often regurgitate and repackage our biases. Generative tech is no different. An added dimension is that generative tech requires massive amounts of processing power (“compute”) and electricity — invisible to the end user — that results in carbon emissions and requires mining the earth, building infrastructure, and loads of actual human labor.

Letter of Recommendation: Make some images with DALL-E and Midjourney AI

“Any sufficiently advanced technology is indistinguishable from magic.”

Arthur C. Clarke

The text-to-image stuff feels like magic, and you just have to try it. I spent a few hours with various tools and even I, a technologist, found it a bit disorienting. Here’s a quick rundown of how you can get started, with two options.

These text-to-image tools only require that you write a prompt; over time, it’s prompting that is the main skill you develop. Each platform takes your prompt and spits out something unique that is informed by their data corpus, defined rules (DALL-E doesn’t render faces, for example) and most importantly, undefined logics. The algorithms don’t tell you why they make the associations they do—that’s part of the mystery and magic of it.

Option 1: DALL-E 2

If you have just a few minutes, you should go with Open AI’s DALL-E 2 (a play on Dolly the sheep and WALL-E). It takes one minute to sign up here and it opens up onto a very clean interface that is incredibly easy to use. I love the “surprise me” feature, an actually viable and serendipitous version of Google’s “I’m Feeling Lucky” feature (why is that still on their search page, by the way?).

Even if you aren’t creating something, these images can inspire you. These particular renders are very reminiscent of Saul Steinberg, one of my favorite illustrators, and sent me down a rabbit hole trying to find some of his art. And yet, there’s something very off here. Look at Steinberg’s clever illustration of gallery-goers below: how does he convey such feeling and interiority with so few strokes? DALL-E’s, meanwhile, lack a ghost in the machine.

Option 2: Midjourney AI

Okay, if you have like 15-30 minutes to spare, try Midjourney. Midjourney is known for creating hyperrealistic renders with little prompting (“too easy” say some users). The experience is a little more involved, but it has a community-driven aspect that I appreciate. First, you sign up here. After logging into, you’re taken to the Midjourney Discord server (basically a semi-public Slack domain). And from there, you actually make prompts within specific channels. In this way, you’re creating in public, learning from others and vice versa. Earlier this year, amidst the Twitter meltdown, I made this terrifying render of a bird fighting a zombie apocalypse using Midjourney:

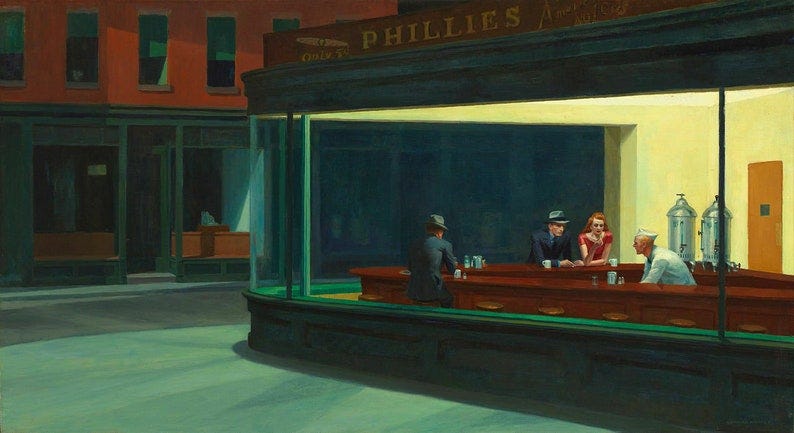

And today, I logged into the Discord server, and immediately saw this haunting render, instantly recognizable: “nighthawks mcdonalds”

I loved the idea and immediately started toying with the prompt. I liked the direction, but I wanted an image that came close to that ineffable desperation in Hopper’s original.

The way Midjourney works, you type your prompt right into the chat, like a CLI. I tried a series of iterations, finally landing on /imagine nighthawks mcdonalds, single person eating alone, wide angle, in style of edward hopper. This was the best result, which is good, but just an approximation of the real thing.

I learned a lot with this exercise. It let me grasp the magic of generative tech, and its inherent limitations as well. I realized that the activity is inherently probabilistic—which is not how we typically think of or experience creation. To land on an image, you enter a few words and the blackbox spits something out; you’re basically rolling the dice. You can’t (yet) will these products to create compositions to your specifications. More of a woodblock than a paintbrush.

I found myself again feeling that the images lacked soul — a human subject’s unique point of view that can by some unknown magic strike a universal chord. I know the idea sounds a bit romantic, but I find it genuinely hard to build an emotional connection with these images. ChatGPT had this to say: “Ultimately, the question of whether images created by AI lack a soul is subjective and depends on the viewer's perception and personal experience. While AI-generated images may not have a human touch, they can still be appreciated for their unique qualities and the creative possibilities they bring to the table.” Convenient!

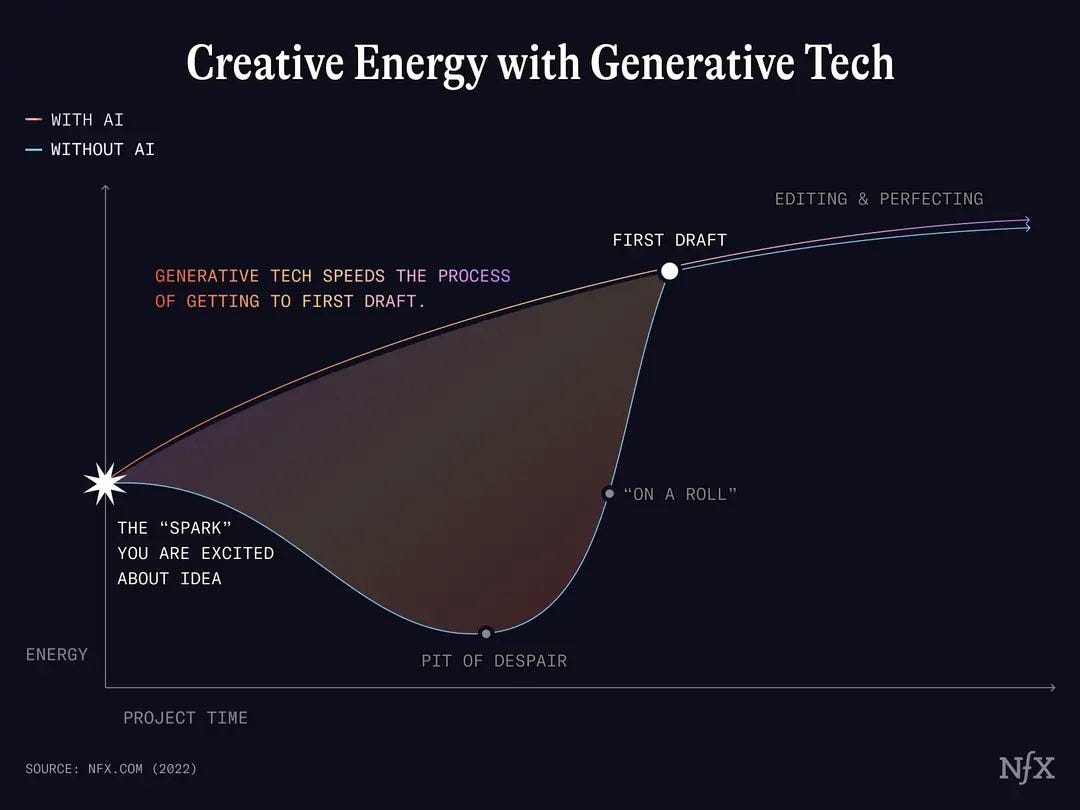

But maybe it is more helpful to understand these images as creative tools, and not ends in themselves. That’s essentially what the CEO of Stable Diffusion, Emad Mostaque, said in a Hard Fork segment a few months ago. He sees Stable Diffusion as a tool that can decrease the time from your idea to the first draft (see below). I buy that, to an extent. It’s an awfully convenient argument that helps deflect critics’ claims that his tech is based on stolen IP, will displace real jobs, etc. (It’s a good interview, though I found most of what he said Pollyannish.)

There is truth in this, though. I asked three artists friends what they thought of generative, and all three informed me it was a key part of their creative process. They use it to create sketches on the fly (from their phones); to draft concepts for advertising campaigns; and to source new sounds while they write songs. Pretty neat.

I originally wanted to spend more time on the hazards inherent in generative in AI, but I am out of space, and maybe I don’t need to be a killjoy with every post. I hope you’ll try one of these products and let me know what you think. XML